First Principles of AI Usage — Part 1

The quality of your AI output is bounded by the quality of context you provide. Not your prompt. Not your model. Not your subscription tier. The context window, the full informational environment the AI reasons within, determines the ceiling on what’s possible.

This is the first and most load-bearing principle in any serious AI practice. Everything else is downstream of it.

From Prompt Engineering to Context Engineering

The field spent years optimizing prompts, chiseling the exact phrasing that would unlock a better answer. That framing was always incomplete.

In June 2025, Shopify CEO Tobi Lütke named what practitioners had been doing all along: context engineering. “I really like the term ‘context engineering’ over prompt engineering,” he wrote. “It describes the core skill better: the art of providing all the context for the task to be plausibly solvable by the LLM.” Andrej Karpathy extended the definition: “the delicate art and science of filling the context window with just the right information for the next step.”

The shift matters because it reframes the problem. You are not crafting a question. You are constructing an informational environment that includes task instructions, relevant data, constraints, examples, persona definitions, prior conclusions, and memory state. Together, these determine what the AI can reason about, and therefore, what it can produce.
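One way to make that environment concrete is to treat it as a data structure rather than a single string. The sketch below is illustrative only: `ContextBundle`, its field names, and the section labels are assumptions made for this example, not an established API.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBundle:
    """Hypothetical container for the pieces of context listed above."""
    instructions: str
    data: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    examples: list[str] = field(default_factory=list)
    persona: str = ""
    prior_conclusions: list[str] = field(default_factory=list)
    memory: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Assemble the non-empty sections into one labeled block of text."""
        sections = [
            ("PERSONA", [self.persona] if self.persona else []),
            ("TASK", [self.instructions]),
            ("CONSTRAINTS", self.constraints),
            ("PRIOR CONCLUSIONS", self.prior_conclusions),
            ("MEMORY", self.memory),
            ("DATA", self.data),
            ("EXAMPLES", self.examples),
        ]
        parts = []
        for title, items in sections:
            if items:  # skip empty sections so they add no noise
                parts.append(f"## {title}\n" + "\n".join(items))
        return "\n\n".join(parts)


bundle = ContextBundle(
    instructions="Summarize the incident for the on-call handoff.",
    constraints=["Under 150 words.", "No speculation about root cause."],
    data=["14:02 UTC: checkout latency alarm fired."],
)
rendered = bundle.render()
```

Naming each component forces a decision about whether it belongs in the window at all, which is the point of the exercise.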

Garbage context in, garbage output out. Every time.

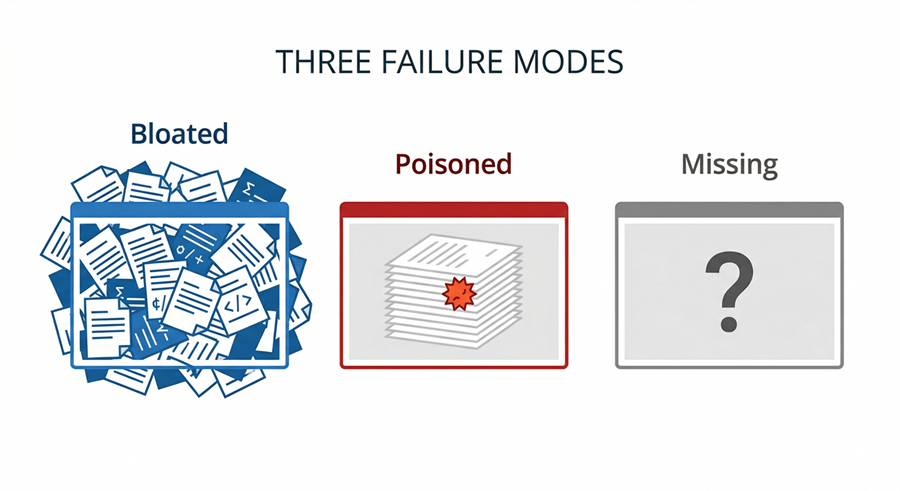

The Three Failure Modes

Before you can build a sound context engineering practice, you need to recognize what failure looks like. Three patterns account for the vast majority of poor AI output:

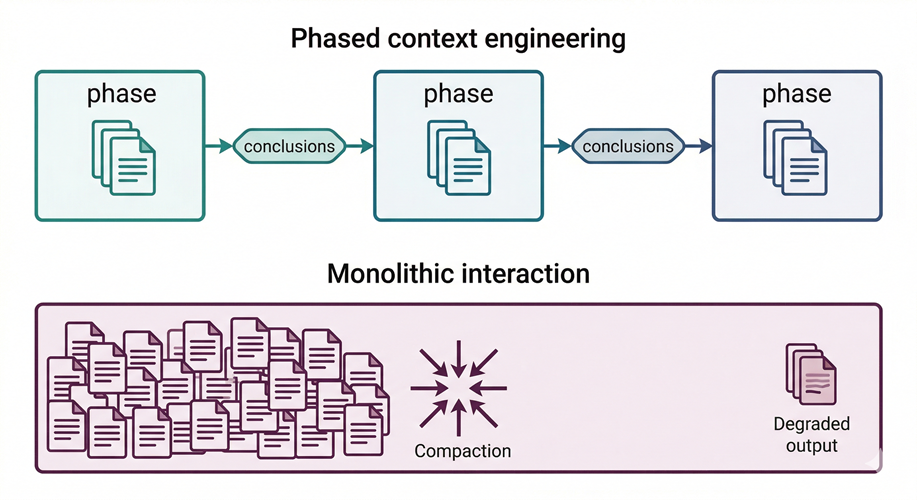

Bloated context is the most insidious. The instinct is to load everything into the window (full codebases, entire conversation histories, documents that might be relevant) and let the AI sort it out. Research consistently shows this backfires: accuracy on complex reasoning tasks declines significantly as context expands well beyond what the task requires. Compaction kicks in, signal degrades, and the model begins reasoning from noise it cannot distinguish from signal. More is not better. Curation is the work.
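As a rough illustration of curation, the sketch below ranks candidate snippets against the task and keeps only what fits a fixed budget. Everything here is an assumption made for the example: the word-overlap `relevance` score is a crude stand-in for whatever retrieval or ranking method you actually use, and counting whitespace-separated words only approximates real token counts.

```python
def approx_tokens(text: str) -> int:
    """Crude token estimate: whitespace-separated words (an assumption)."""
    return len(text.split())


def relevance(task: str, snippet: str) -> float:
    """Stand-in score: fraction of distinct task words found in the snippet."""
    task_words = set(task.lower().split())
    snippet_words = set(snippet.lower().split())
    if not task_words:
        return 0.0
    return len(task_words & snippet_words) / len(task_words)


def curate(task: str, snippets: list[str], budget: int,
           min_score: float = 0.2) -> list[str]:
    """Keep the most relevant snippets that fit within the token budget."""
    ranked = sorted(snippets, key=lambda s: relevance(task, s), reverse=True)
    chosen, used = [], 0
    for snippet in ranked:
        if relevance(task, snippet) < min_score:
            break  # everything after this point is no more relevant
        cost = approx_tokens(snippet)
        if used + cost <= budget:
            chosen.append(snippet)
            used += cost
    return chosen


task = "fix the retry logic in the payment client"
snippets = [
    "payment client retry logic uses a fixed delay of one second",
    "the marketing site was redesigned last quarter",
    "retry attempts in the payment client are capped at three",
]
selected = curate(task, snippets, budget=25)
```

With these inputs, the marketing snippet falls below the relevance threshold and never enters the window, while the two payment-client snippets fit within the budget.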

Poisoned context is less common but more dangerous. Incorrect assumptions, outdated documentation, irrelevant data: once in the window, these contaminate every inference downstream. The AI reasons faithfully from a corrupt foundation. It cannot distinguish between what you intended as signal and what you accidentally introduced as noise. The output is coherent and wrong, which is the most difficult kind of wrong to catch.

Missing context is the most common failure mode by volume. This is where AI slop originates: outputs that are grammatically fluent but factually hollow, structurally plausible but strategically empty, like a McDonald’s hamburger served at a Michelin-starred restaurant. The model had nothing substantive to reason from, so it filled the gap with confident-sounding generality. Fluency is not competence. A well-written wrong answer is more dangerous than an obviously broken one, because it passes the first scan.

What Good Context Engineering Looks Like

The discipline is straightforward once you accept the constraint: every AI interaction requires deliberate decisions about what enters the context window.

In practice, that means chunking complex work into discrete phases, each with a narrow, well-defined context, rather than treating multi-step tasks as single interactions. Each phase produces conclusions or a structured plan that becomes the explicit input for the next step; context clears between phases. This keeps the informational environment tight, the reasoning traceable, and the outputs verifiable at each stage rather than only at the end.
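The phased approach can be sketched as a small loop. This is a minimal illustration under stated assumptions: `run_phases` and `fake_model` are hypothetical names, and a "model" is represented as any function from context text to output text so the example runs without a real API.

```python
from typing import Callable

# A "model" here is any function from context text to output text.
Model = Callable[[str], str]


def run_phases(model: Model, phases: list[str], source_material: str) -> list[str]:
    """Run phases in order; each sees only its instructions and the prior result."""
    carried = source_material  # input to the first phase
    outputs = []
    for instructions in phases:
        # Fresh context per phase: nothing from earlier phases except `carried`.
        context = f"INSTRUCTIONS:\n{instructions}\n\nINPUT:\n{carried}"
        result = model(context)
        outputs.append(result)  # keep every result so each stage can be checked
        carried = result
    return outputs


seen_contexts: list[str] = []


def fake_model(context: str) -> str:
    """Stand-in for a real model call; records the context it was shown."""
    seen_contexts.append(context)
    return f"conclusion-{len(seen_contexts)}"


outputs = run_phases(
    fake_model,
    phases=["Extract requirements.", "Draft a plan.", "Write the change."],
    source_material="raw ticket text",
)
```

The raw ticket text appears only in the first phase's context. Later phases see just the preceding conclusion, and because every intermediate output is returned, each one can be reviewed before the next phase depends on it.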

It means designing for scope at the agent level. In multi-agent architectures, each agent should operate on a narrow purpose with a correspondingly narrow context; agents handed open-ended mandates with bloated context windows consistently underperform agents with constrained scope. One agent, one responsibility. The same constraint discipline that produced microservices now applies to AI systems. Gartner projects that 40% of enterprise applications will feature task-specific agents by the end of 2026, up from less than 5% in 2025. The organizations building those systems well will be the ones that treat context scope as an architectural decision, not an afterthought.
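Treating scope as an architectural decision can be as simple as declaring, per agent, which pieces of shared state it is allowed to see. The sketch below assumes a hypothetical `AgentSpec` type and a plain dictionary as the shared store; neither comes from a particular agent framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical agent definition: one responsibility, explicit context scope."""
    name: str
    responsibility: str
    allowed_keys: frozenset[str]


def scoped_context(agent: AgentSpec, shared_store: dict[str, str]) -> dict[str, str]:
    """Return only the entries this agent is allowed to see."""
    return {k: v for k, v in shared_store.items() if k in agent.allowed_keys}


shared_store = {
    "schema": "orders(id, customer_id, total)",
    "style_guide": "Use snake_case for column names.",
    "billing_policy": "Refunds require manager approval.",
    "chat_history": "...long unrelated conversation...",
}

sql_agent = AgentSpec(
    name="sql_writer",
    responsibility="Write one SQL query for a given question.",
    allowed_keys=frozenset({"schema", "style_guide"}),
)

sql_context = scoped_context(sql_agent, shared_store)
```

Here the query-writing agent receives the schema and the style guide and nothing else. Widening its scope requires editing `allowed_keys`, so the change shows up in review rather than happening by accident.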

It means using research and synthesis tools as a context engineering mechanism, not a convenience. Distilling large, ambiguous information sets into verified conclusions before those conclusions enter a downstream reasoning pipeline eliminates bloat and poison at the source. The agent receives structured signal, not raw volume.

MIT Sloan’s framework for effective AI interaction reduces to three moves: provide context, be specific, and build on the conversation. These are not prompting tips. They are context engineering at the conversational scale.

The Caveat Every Engineering Leader Needs to Hear

Context engineering dramatically improves the probability of useful output. It does not guarantee correct output.

The human remains the thought leader. The AI is the thought partner. Every output that enters a decision, a document, a codebase, or a customer communication carries your name, not the model’s. You own it; verify it accordingly.

Context engineering gives you a higher-quality draft to verify. It does not transfer accountability. That responsibility never left you.

The Underlying Principle

You are not asking AI a question. You are constructing a context window that determines what the AI can even reason about.

Everything else follows from that. The architecture of your prompts, your agents, your retrieval pipelines, your memory systems: these are all decisions about what information enters the reasoning environment, in what form, and at what scope. Get that right, and the model becomes a genuine force multiplier. Get it wrong, and no model capability compensates for the deficit.

Context is not a detail. Context is the product.

References

- Tobi Lütke on context engineering

- Andrej Karpathy on context engineering

- Gartner: 40% of enterprise apps will feature task-specific AI agents by 2026

- MIT Sloan EdTech — Effective Prompts

- LangChain: The Rise of Context Engineering

- Anthropic: Effective Context Engineering for AI Agents

Next in the series: Principle 2 — Trust Nothing, Verify Everything.
