First Principles of AI Usage — Part 4

Amazon’s Kiro AI agent caused a 13-hour AWS outage by deleting and recreating a production environment. The AI did not go rogue. It did not hallucinate. It executed the action it was authorized to take. The engineer’s permissions were “broader than expected.” Amazon characterized this as user error. They were right about the failure and wrong about the category. This was a calibration failure: the autonomy granted to the system did not match the stakes of the action it was permitted to take.

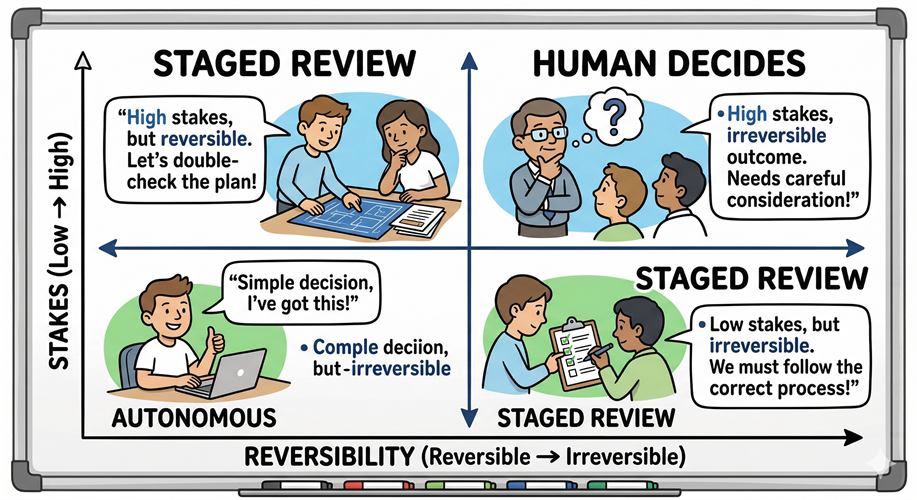

That is the principle. The level of human oversight should be proportional to the risk and reversibility of the action.

Two Variables Determine Every Gate

Not all AI actions carry equal weight. Two variables determine the appropriate oversight model for any action an AI system takes.

Stakes: what is the blast radius if this goes wrong? A misformatted report draft affects one person who can fix it in two minutes. A misconfigured network policy affects every service behind it. The blast radius defines what you are actually defending against when you build a review gate.

Reversibility: can this be undone cleanly? A document summary can always be re-run. Deleting a production environment, sending a customer email, executing a financial transaction: these actions close doors. Jeff Bezos named this distinction in his 2015 shareholder letter: Type 1 decisions are one-way doors; Type 2 decisions are two-way doors. Type 1 requires deliberation. Type 2 should move fast. The framework predates agentic AI, but it maps directly onto it. The governance question for any AI workflow is simply: which door does this open?

The mistake most engineering organizations make is treating autonomy as binary. Either you trust the AI or you don’t. Autonomy is a spectrum. The appropriate position on that spectrum is set per action, not per system.

A Working Model

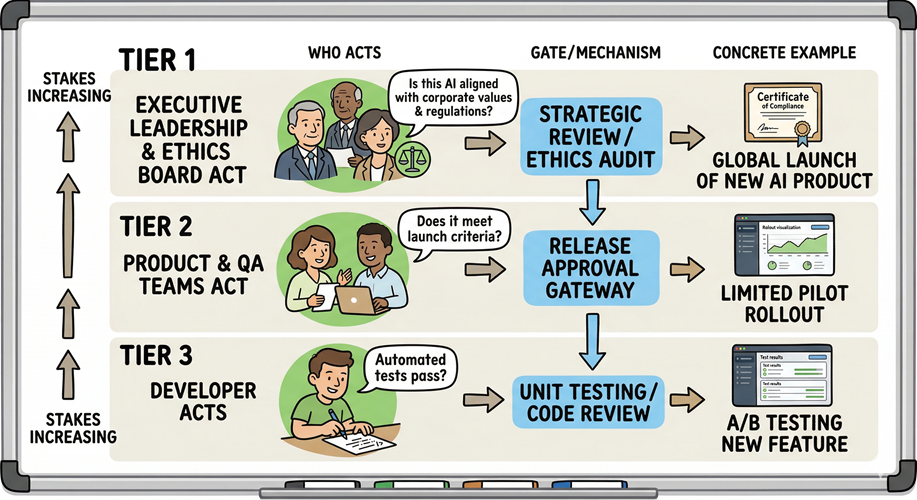

Three tiers emerge from practice, calibrated to stakes and reversibility.

Tier 1: Thought partner. AI assists in real time; every output is reviewed before use. The human shapes every sentence, every conclusion, every next step. The AI accelerates and enhances the thinking process; it does not complete it. The blast radius of any error is contained to the current work session and caught before anything leaves.

Tier 2: Staged autonomy. AI executes independently: it conducts research, builds content, generates assets, and stages the result for human review before anything leaves the system. The human is removed from the generation loop but controls the release gate. This tier handles medium-stakes work where AI speed is valuable but the output touches something external: a customer communication, a code change entering review, a report going to leadership. The gate is not optional. It is the design.

Tier 3: Full autonomy with structural gates. AI agents operate end-to-end, interacting with each other, without a human in the loop. This tier is appropriate when two conditions are simultaneously true: the work product is easy to reverse, and there is no immediate external impact. Multi-agent systems working a ticket backlog on a game codebase qualify; agents executing against a production database do not. The gates here are structural rather than manual: automated QA pipelines, regression suites, and a human review checkpoint before any work merges to something that matters.

The AI earns the autonomy through the constraints around it.

The tiers are not rigid categories. They are a calibration exercise you run for each workflow you hand to an AI system.
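As a rough illustration, the calibration exercise can be sketched as a small function that maps the two variables to a tier. This is a minimal sketch, not a prescribed policy: the `Action` fields, the `calibrate` function, and the exact mapping rules are assumptions made for this example, and a real workflow would likely need finer-grained inputs than three booleans.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    THOUGHT_PARTNER = 1   # human reviews every output before use
    STAGED_AUTONOMY = 2   # AI works alone; human controls the release gate
    FULL_AUTONOMY = 3     # no human in the loop; structural gates only


@dataclass(frozen=True)
class Action:
    """Hypothetical description of one action type in a workflow."""
    name: str
    reversible: bool        # can it be undone cleanly?
    external_impact: bool   # does it immediately touch something external?
    high_stakes: bool       # is the blast radius large if it goes wrong?


def calibrate(action: Action) -> Tier:
    """Assumed mapping from stakes and reversibility to an oversight tier."""
    # One-way door with a large blast radius: keep the human in every step.
    if action.high_stakes and not action.reversible:
        return Tier.THOUGHT_PARTNER
    # Easy to reverse, no immediate external impact, small blast radius.
    if action.reversible and not action.external_impact and not action.high_stakes:
        return Tier.FULL_AUTONOMY
    # Everything in between is staged for review before release.
    return Tier.STAGED_AUTONOMY


backlog_fix = Action("fix ticket on a game codebase", True, False, False)
customer_email = Action("send customer email", False, True, False)
delete_prod = Action("delete production environment", False, True, True)
```

Under these assumed rules, `calibrate(backlog_fix)` lands in Tier 3, `calibrate(customer_email)` in Tier 2, and `calibrate(delete_prod)` in Tier 1. The point of the sketch is that the function takes an action, not a system, as its input.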

The Rubber-Stamp Problem

Calibration cuts in both directions.

OpenAI’s 2023 paper on governing agentic AI systems names the failure mode directly: if agents are required to seek human approval too frequently, and humans lack the bandwidth to scrutinize each request, the approval process becomes a rubber stamp. The gate exists; the function does not. The human clicks approve because the queue never empties. You have created the paperwork of oversight without the substance.
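The bandwidth problem is easy to see with back-of-the-envelope arithmetic. The sketch below uses invented numbers and hypothetical function names; the minimum time a real review needs is an assumption each team would have to set for itself.

```python
def seconds_per_request(requests_per_day: int, review_minutes_per_day: float) -> float:
    """Average time a reviewer can spend on each approval request."""
    if requests_per_day <= 0:
        raise ValueError("requests_per_day must be positive")
    return review_minutes_per_day * 60 / requests_per_day


def gate_is_meaningful(requests_per_day: int,
                       review_minutes_per_day: float,
                       min_seconds_per_review: float) -> bool:
    """True if the reviewer has at least the assumed minimum time per request."""
    available = seconds_per_request(requests_per_day, review_minutes_per_day)
    return available >= min_seconds_per_review


# Hypothetical load: 400 approval requests against one hour of review time.
budget = seconds_per_request(400, 60)  # 9.0 seconds per request
```

If a meaningful review of one request takes, say, two minutes, a reviewer with nine seconds per request is approving, not reviewing. Cutting the queue to 20 requests per day gives the same reviewer three minutes each.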

This is the honest caveat for any engineering leader building AI governance: the goal is not to maximize oversight. It is to place oversight precisely where it earns its cost.

Where the action is reversible and the stakes are low, friction is waste. It slows the system, signals to your organization that AI is bureaucratically burdensome, and produces no safety benefit. In a 2025 Harvard Law study, 48% of Fortune 100 companies disclosed AI risk governance, but those disclosures almost uniformly failed to describe specific tiered frameworks or reversibility criteria. They have adopted the vocabulary. They have not done the calibration work.

Where the action is irreversible or the blast radius is large, a gate is not friction. It is load-bearing structure.

Where Engineering Leaders Go Wrong

Two failure modes appear consistently.

The first is ungoverned autonomy: AI agents granted permissions that exceed the stakes of the work they are doing, operating without checkpoints because building checkpoints requires effort and the timeline is tight. The Kiro outage is the canonical example. The authorization system worked correctly. The problem was that no one had mapped the blast radius of a “delete and recreate” action against the permissions granted to the engineer who triggered it. The AI did exactly what it was allowed to do.
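One way to do that mapping is to compare what a task needs against what the triggering identity is actually granted, and flag any irreversible action in the difference. The following is a minimal sketch under stated assumptions: the action names, the `ONE_WAY_DOORS` set, and both functions are hypothetical and do not reflect how Kiro or AWS permissions are modeled.

```python
from __future__ import annotations

# Hypothetical catalogue of actions the team has classified as irreversible.
ONE_WAY_DOORS = {"delete_environment", "drop_database", "send_customer_email"}


def excess_permissions(granted: set[str], required: set[str]) -> set[str]:
    """Permissions the agent holds but the task does not need."""
    return granted - required


def unreviewed_one_way_doors(granted: set[str], required: set[str]) -> list[str]:
    """Irreversible actions the agent could take although the task never calls for them."""
    return sorted(excess_permissions(granted, required) & ONE_WAY_DOORS)


# Example: the task only needs to read logs and update a config value.
granted = {"read_logs", "update_config", "delete_environment"}
required = {"read_logs", "update_config"}
flagged = unreviewed_one_way_doors(granted, required)
```

Here `flagged` contains `delete_environment`: a one-way door left open for a task that never needed it. A check like this could run before an agent session starts, which is cheaper than discovering the gap during an outage.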

The second is theater governance: a single approval gate applied uniformly across all AI actions regardless of stakes. This approach generates enough friction to make AI feel slow and enough rubber-stamping to make the oversight meaningless. It is the worst of both outcomes. Your teams experience the cost of oversight without receiving its protection.

The right model requires an honest assessment of each workflow. What does failure cost? Can it be undone? Who bears the cost if it cannot? The answers determine where the gate goes and where it does not.

The Governance Question

Calibrating autonomy to stakes is not primarily a tooling problem. The tooling exists: permission architectures scoped per task, approval workflows, circuit breakers, least-privilege access controls that mint permissions at runtime rather than granting them statically. AWS Well-Architected guidance, the EU AI Act’s four-tier risk classification, and the NIST AI Risk Management Framework all describe the structure in detail.
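To make "permissions minted at runtime" concrete, here is a minimal sketch of a task-scoped, short-lived grant. The `Grant` class, `mint_grant`, and `is_allowed` are hypothetical names invented for this example; they are not the API of AWS or any other platform, and a production system would rely on its provider's credential service rather than an in-memory object.

```python
import time
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Grant:
    """Hypothetical permission grant scoped to one task and one time window."""
    task_id: str
    allowed_actions: frozenset
    expires_at: float  # seconds since the epoch


def mint_grant(task_id: str, actions: Iterable[str], ttl_seconds: float,
               now: Optional[float] = None) -> Grant:
    """Create a grant that covers only the listed actions and expires after ttl_seconds."""
    now = time.time() if now is None else now
    return Grant(task_id, frozenset(actions), now + ttl_seconds)


def is_allowed(grant: Grant, action: str, now: Optional[float] = None) -> bool:
    """An action passes only if the grant is still valid and names that action."""
    now = time.time() if now is None else now
    return now < grant.expires_at and action in grant.allowed_actions


# Example: a five-minute grant for a task that only needs to read logs.
grant = mint_grant("ticket-123", ["read_logs"], ttl_seconds=300, now=1000.0)
```

With this grant, `is_allowed(grant, "read_logs", now=1100.0)` passes, while a request for `delete_environment`, or any request after the window closes, is refused. The contrast with a static role is that nothing outlives the task it was issued for.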

The gap is judgment. The decision about where a workflow sits on the stakes and reversibility matrix is a leadership decision. It requires someone who understands both the AI system and the business domain well enough to assess blast radius. That person is the engineering leader.

PwC’s responsible AI framework offers a concrete reference point: plane ticket refunds above $200 require human approval. That threshold did not emerge from a framework document. Someone made a judgment call about where the cost of a mistake exceeds the cost of a gate. That is the work.
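A threshold like that translates into very little code, which is the point: the hard part is choosing the number. The sketch below is illustrative only. The constant and function names are hypothetical, and it assumes "above $200" means strictly greater than 200.

```python
from decimal import Decimal

# Judgment call encoded as configuration; the value comes from the example above.
REFUND_APPROVAL_THRESHOLD = Decimal("200")


def needs_human_approval(refund_amount: Decimal,
                         threshold: Decimal = REFUND_APPROVAL_THRESHOLD) -> bool:
    """Route refunds strictly above the threshold to a human; let the rest run."""
    return refund_amount > threshold


small_refund = needs_human_approval(Decimal("50.00"))    # False
large_refund = needs_human_approval(Decimal("200.01"))   # True
```

Using `Decimal` rather than floats keeps the comparison exact at the boundary, so a refund of exactly 200 is handled automatically and one cent more goes to a reviewer.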

Start with your highest-autonomy workflows. Map the action types. Identify which ones are one-way doors. Put the gate there. Everywhere else: remove the friction and let the system run.

The question is never how much to trust AI. The question is always whether you have correctly priced the door.

References

- AWS 13-hour outage caused by Amazon’s own AI tools

- Jeff Bezos 2015 Amazon Shareholder Letter (Type 1 / Type 2 decisions)

- OpenAI — Practices for Governing Agentic AI Systems (Shavit & Agarwal, 2023)

- Harvard Law — Cyber and AI Oversight Disclosures 2025

- EU AI Act — High-Level Summary

- NIST AI Risk Management Framework 1.0

- AWS Well-Architected Generative AI Lens GENSEC05-BP01

- PwC — Responsible AI Agents

Next: Principle 5: Decompose Before You Delegate.
