First Principles of AI Usage — Part 9

AI is a multiplier. The thing it multiplies is you.

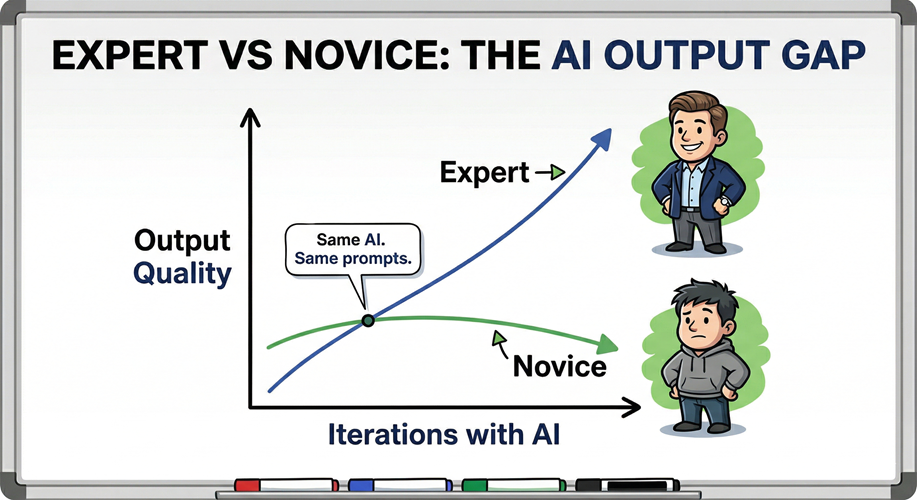

That framing has a sharp implication most teams prefer not to confront: multiplying a strong foundation produces outsized returns; multiplying a weak one produces outsized errors. The same tool, the same model, the same prompt structure — different output quality, entirely determined by the expertise of the person directing it. This is not a limitation of current AI. It is the structural constraint of the technology.

The Multiplier Equation

Peer-reviewed research in Cell/Patterns (2025) quantified what practitioners already suspected. Technical experts using AI showed an average performance improvement of approximately 45%. General employees using the same AI showed approximately 20%. Same model. Same interface. The variable was domain knowledge.

The mechanism is specific: experts use iterative refinement to progressively improve quality, while novices use the same cycles to progressively entrench flaws. Both groups engage in multi-turn dialogue. Only one group has the expertise to recognize which direction quality is moving. The research terms this a “multiplicative effect on the expert-novice gap.” AI does not close that gap. It widens it.
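To make the widening concrete, here is a small arithmetic sketch. The uplift percentages are the approximate figures cited above; the baseline scores (100 for the expert, 60 for the novice) are hypothetical numbers chosen only for illustration and do not come from the research.

```python
def with_ai(baseline: float, uplift_pct: int) -> float:
    """Scale a baseline performance score by a percentage uplift."""
    return baseline * (100 + uplift_pct) / 100


# Hypothetical baselines, assumed purely for illustration.
EXPERT_BASELINE = 100
NOVICE_BASELINE = 60

# Approximate uplifts cited in the paragraph above.
EXPERT_UPLIFT_PCT = 45
GENERAL_UPLIFT_PCT = 20

expert_after = with_ai(EXPERT_BASELINE, EXPERT_UPLIFT_PCT)
novice_after = with_ai(NOVICE_BASELINE, GENERAL_UPLIFT_PCT)

gap_before = EXPERT_BASELINE - NOVICE_BASELINE
gap_after = expert_after - novice_after

print(f"gap before AI: {gap_before}, gap after AI: {gap_after}")
```

Under these assumed baselines, both people improve, but the distance between them grows from 40 points to 73. Different baselines change the exact numbers; the gap widens whenever the stronger starting point also receives the larger uplift.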

Stack Overflow’s March 2026 analysis of developer AI usage reached the same conclusion from a practitioner direction: domain expertise remains the primary determinant of AI output quality. Not the model. Not the context. Not the prompt technique. The person applying it.

What AI Cannot Do For You

AI can process information faster than you can read it. It can generate options you would not have considered. It can execute repetitive tasks at a scale no team can match manually.

It cannot understand your specific organizational context without being told. It cannot make value judgments that reflect your priorities. It cannot take responsibility for outcomes.

These are not temporary limitations waiting on the next model release. They are structural properties of the technology. An AI agent operating in your engineering organization does not know what “good” looks like for your team, your constraints, your customers, or your risk tolerance. You need to encode it accurately, which requires that you know it first.

McKinsey’s trust framework for the age of agents is direct on this: humans provide judgment, ethics, and strategic direction; AI delivers speed, scale, and intelligence. That is not a philosophical statement about human dignity. It is an architectural description of how the system actually functions. Remove the human judgment layer and the system does not become autonomous. It becomes unguided.

The Highest-Risk Pattern

Using AI in domains where you lack expertise is the highest-risk pattern in AI adoption. It is also, predictably, one of the most common.

The failure mode is invisible by design. When AI fabricates plausible-sounding analysis in a domain you do not understand, the output reads as competent. You have no baseline to compare it against. You cannot detect the hallucination. You cannot evaluate the recommendation. You approve the output not because it is correct but because it is fluent, which returns us to the trust asymmetry described in Principle 2 of this series.

I ran into this directly in a personal project. I decided to build a procedurally generated 3D game, a significant leap from the 2D work I had done before. I built specialized AI agents, ran proofs of concept across different generation methodologies, and refined standards and process documentation. The agent swarm was sophisticated. The scaffolding was sound.

It still could not make material progress.

The constraint was not the tooling. It was me. I did not know enough about procedural generation or 3D rendering to tell a bad architectural decision from a good one, or to evaluate whether the generated output was moving toward something viable or compounding a flawed assumption. I could not guide the agents because I could not evaluate what they were producing. The AI ran like the Flash down the path I chose, and it was the wrong path. The only way forward was deliberate skill development before returning to the AI-assisted work.

That is not a failure of AI. It is the principle operating correctly.

The Novice Is Not Helpless

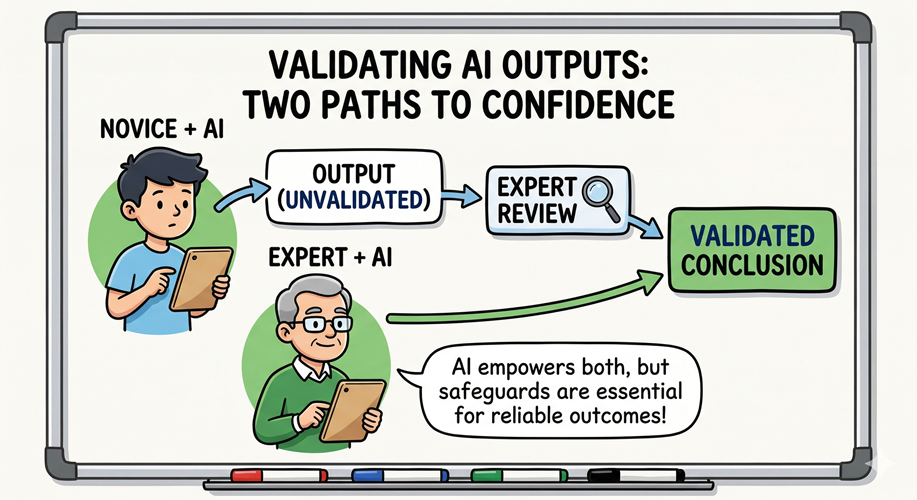

None of this means novices should avoid AI in unfamiliar domains. The constraint is not experimentation; it is unchecked trust in the output.

The safeguard is structured skepticism combined with expert review. Use AI to explore and accelerate learning in new areas; treat every output as a hypothesis, not a conclusion. Then have someone with genuine expertise review the work before it is acted upon. The novice cannot self-validate, but they can route their output through someone who can.

This is not a workaround. It is how professional practice works in every high-stakes domain. Peer review, code review, legal review, and design critique are mechanisms that exist precisely because the person closest to the work is often the last to recognize its flaws. AI makes that principle more urgent, not less.
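For teams that want to build this safeguard into their tooling, a minimal sketch of a review gate might look like the following. Every name here (`AIOutput`, `Status`, `expert_review`, `act_on`) is hypothetical and invented for illustration; it is one possible shape for the idea, not a prescribed implementation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Set


class Status(Enum):
    HYPOTHESIS = "hypothesis"  # default state: unverified AI output
    REVIEWED = "reviewed"      # approved by someone with domain expertise


@dataclass
class AIOutput:
    content: str
    domain: str
    status: Status = Status.HYPOTHESIS
    reviewer: Optional[str] = None


def expert_review(output: AIOutput, reviewer: str,
                  reviewer_domains: Set[str], approved: bool) -> AIOutput:
    """Record a review, but only from a reviewer who knows the domain."""
    if output.domain not in reviewer_domains:
        raise ValueError(f"{reviewer} cannot validate {output.domain} work")
    if approved:
        output.status = Status.REVIEWED
        output.reviewer = reviewer
    return output


def act_on(output: AIOutput) -> str:
    """Refuse to act on anything that is still only a hypothesis."""
    if output.status is not Status.REVIEWED:
        raise PermissionError("output has not passed expert review")
    return f"acting on: {output.content}"


# Usage: a draft starts as a hypothesis and is usable only after review.
draft = AIOutput(content="terrain generation plan", domain="3d-rendering")
expert_review(draft, reviewer="senior graphics engineer",
              reviewer_domains={"3d-rendering"}, approved=True)
print(act_on(draft))
```

The design choice worth noting is that the default state is `HYPOTHESIS`: nothing becomes actionable by accident, and a reviewer without expertise in the relevant domain cannot approve it.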

Where This Sits in the System

This principle is downstream of Principle 2 (Trust Nothing, Verify Everything) and upstream of Principle 3 (You Own the Output). The chain is load-bearing. If I had planned better, I would have ordered this series differently.

You cannot verify what you cannot evaluate. You cannot evaluate what you do not understand. That means the human accountability in Principle 3, the rule that your name is on whatever the AI produces, requires that the human has sufficient expertise to exercise genuine judgment, not just nominal oversight.

Deloitte’s 2026 analysis of human-AI collaboration frames the opportunity as amplification, not automation: using technology to enhance uniquely human strengths such as judgment, creativity, and ethical control. That framing is correct, but it carries a constraint that often goes unstated. You cannot amplify what is not there. The opportunity scales with the expertise you bring.

The practical implication for engineering leaders: your AI strategy is only as strong as the domain expertise behind it. Deploying AI agents in areas where your team lacks the judgment to audit their decisions is not acceleration. It is risk transferred to a system that cannot recognize it.

The Underlying Principle

AI is fast. AI is broad. AI is tireless.

None of those properties substitute for knowing what good looks like in your domain.

The ceiling on what AI can do for your team is set by the quality of human judgment directing it. Build the expertise first. Use AI to move faster once you have it. That ordering is not optional; it is the sequence the technology requires.

References

- AI as Cognitive Amplifier

- Recalibrating Academic Expertise in the Age of Generative AI

- Is AI Creating Incompetent Experts?

- Domain Expertise Still Wanted (Stack Overflow, March 2026)

- Scaling Human-AI Collaboration (Deloitte, 2026)

- Trust in the Age of Agents (McKinsey)

- Role of HITL in AI Data Management (TDWI)
