First Principles of AI Usage — Part 5

I asked an AI agent to build a procedurally generated terrain system for a Godot video game. I gave it a comprehensive requirements list. It built one. The code ran, the terrain rendered, and yet starting over from scratch proved more effective than improving what it had produced.

The problem was not the model. The problem was the shape of the task.

Why Ambiguity Distributes

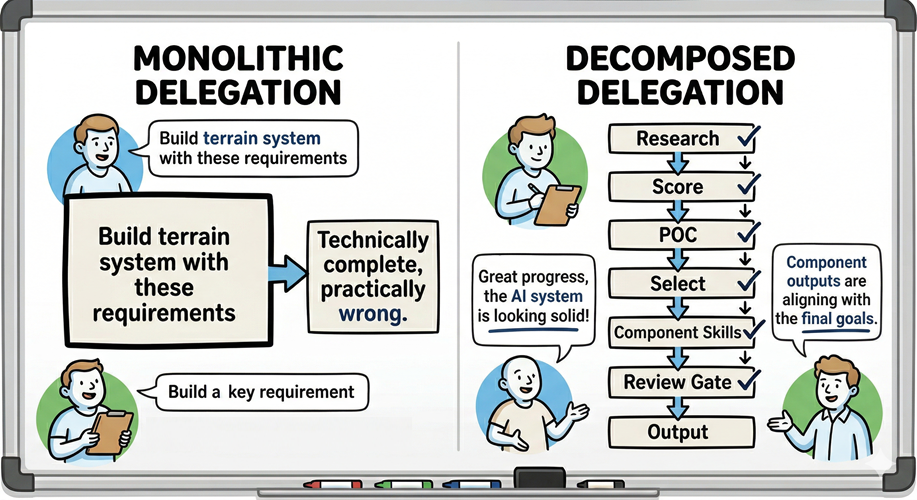

AI performs dramatically better on focused, well-scoped tasks than on ambiguous, multi-objective requests. This is not a model limitation; it is a structural one. When a task contains multiple objectives, implicit constraints, and undefined success criteria, the model has no mechanism to surface that ambiguity before acting. It distributes assumptions across the output. Some of those assumptions align with what you needed. Many do not. You receive something that is technically complete and practically wrong.

O’Reilly’s Five Principles of Prompting codifies this as “Divide Labor”: decompose before you delegate. Agentic security literature extends it further, advocating for agents built around a single responsibility rather than an open mandate. The same constraint discipline that produced microservices architecture now applies to AI systems. One agent, one task. One context window, one objective. The principle does not change because the worker is a language model.

The Difference in Practice

After the terrain failure, I approached 3D mesh generation for the same project differently. The process began with a research phase: AI surfaced the leading methodologies for mesh generation in Godot. It then scored each methodology against the project’s constraints, the available tooling, and the capabilities of the agent that would execute the work. Three agent instances built parallel proofs of concept. I reviewed all three manually; AI scored them against defined evaluation criteria. I iterated on the most promising approaches. Only after selecting a methodology did I decompose the process into major phases and build custom skills for a specialized technical-artist agent, with review gates at each handoff. Only then was the pipeline ready to attempt even the first mesh that might enter the game.

The output quality difference was significant. The maintainability difference was more important.

Tweaking a monolith means touching everything to change one thing. Tweaking a decomposed process means updating the component that needs to change and leaving the rest intact. Each step evolves independently. The process itself becomes a durable asset rather than a fragile artifact.

Decomposition Enables Verification

Beyond output quality, there is a structural reason decomposition produces better outcomes: it makes verification possible at the output of each atomic step. Inspecting each step lets you tune the process before issues compound and obscure the root cause of poor quality.

A monolithic output must be evaluated as a whole. That evaluation is difficult to perform rigorously because the output is large, the criteria are diffuse, and there is no clean boundary between what is working and what is not. A decomposed output can be verified one component at a time. Each step has a defined objective and a defined output format. You confirm it is correct before it becomes input to the next step. Errors are caught at their origin rather than surfacing as symptoms three stages downstream.
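To make step-level verification concrete, here is a minimal Python sketch of a pipeline that checks each step's output before it becomes the next step's input. `Step`, `run_pipeline`, and `VerificationError` are hypothetical names invented for illustration, and the lambdas stand in for real agent calls and real checks; this is not any particular agent framework's API.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Step:
    """One atomic step: a single objective with its own output check."""
    name: str
    run: Callable[[Any], Any]       # does the work (stand-in for an agent call)
    verify: Callable[[Any], bool]   # checks this step's output, and only this step's


class VerificationError(Exception):
    pass


def run_pipeline(steps: list[Step], initial_input: Any) -> Any:
    """Run steps in order, verifying each output before it feeds the next step."""
    data = initial_input
    for step in steps:
        output = step.run(data)
        if not step.verify(output):
            # Fail at the origin instead of surfacing as a symptom downstream.
            raise VerificationError(f"step '{step.name}' failed verification")
        data = output
    return data


# Hypothetical three-step flow: research, score, select. Scores are placeholders.
steps = [
    Step("research",
         lambda _: ["method_a", "method_b", "method_c"],
         lambda methods: len(methods) >= 2),
    Step("score",
         lambda methods: dict(zip(methods, [0.6, 0.8, 0.4])),
         lambda scores: all(0.0 <= s <= 1.0 for s in scores.values())),
    Step("select",
         lambda scores: max(scores, key=scores.get),
         lambda choice: isinstance(choice, str)),
]
print(run_pipeline(steps, None))
```

If any check fails, the error names the step where the problem originated, which is the property a monolithic output cannot offer.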

Principle 2 — Trust Nothing, Verify Everything. Decomposition does not replace verification; it makes it executable.

Decomposition also enables calibrated oversight. Principle 4 — Calibrate Autonomy to Stakes. When a task is broken into discrete steps, each step can be assigned the appropriate level of human review based on its blast radius. A research synthesis step and a production deployment step require different gates. A monolithic task obscures that distinction entirely.
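A minimal sketch of calibrated oversight, assuming a simple three-level blast-radius scale. The `BlastRadius` levels, `Gate` types, and the mapping between them are hypothetical policy choices, not a standard. The sketch shows that decomposed steps can each receive their own gate, while a monolith collapses to the heaviest gate any part of it requires.

```python
from enum import Enum


class BlastRadius(Enum):
    LOCAL = 1        # output stays in drafts or scratch artifacts
    PROJECT = 2      # output feeds later steps or shared project assets
    PRODUCTION = 3   # output reaches users or live systems


class Gate(Enum):
    AUTO = "automated check only"
    SPOT_CHECK = "human spot-check"
    APPROVAL = "explicit human approval"


# Hypothetical policy: the larger the blast radius, the heavier the gate.
GATE_FOR_RADIUS = {
    BlastRadius.LOCAL: Gate.AUTO,
    BlastRadius.PROJECT: Gate.SPOT_CHECK,
    BlastRadius.PRODUCTION: Gate.APPROVAL,
}


def assign_gates(steps: dict[str, BlastRadius]) -> dict[str, Gate]:
    """Give each decomposed step its own gate based on its blast radius."""
    return {name: GATE_FOR_RADIUS[radius] for name, radius in steps.items()}


def monolith_gate(steps: dict[str, BlastRadius]) -> Gate:
    """A monolith has a single gate, so it needs the heaviest gate any part requires."""
    worst = max(steps.values(), key=lambda radius: radius.value)
    return GATE_FOR_RADIUS[worst]


task = {
    "research synthesis": BlastRadius.LOCAL,
    "asset integration": BlastRadius.PROJECT,
    "production deployment": BlastRadius.PRODUCTION,
}
for step, gate in assign_gates(task).items():
    print(f"{step}: {gate.value}")
print(f"as a monolith: {monolith_gate(task).value}")
```

Under this policy, the research step no longer waits on the same approval as the deployment step; in the monolithic version, everything does.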

The Meta-Example

I use AI to write these blog posts. Not as a replacement for the thinking; as a structured process designed to extract it.

The workflow decomposes into distinct phases: deep research, personal research, writing, image definition, and image generation. Each phase has a defined scope and produces a defined output that the next phase builds on. The personal research phase uses AI to guide me through targeted questions designed to surface examples and insights from direct experience. The question that produced the Godot story in this post was generated by that phase.

The decomposed process does not replace the human value in the writing. It creates the conditions for that value to surface. AI operating on an open mandate produces what it predicts you want. AI operating inside a structured process produces what the process is designed to extract. That distinction is the entire argument.

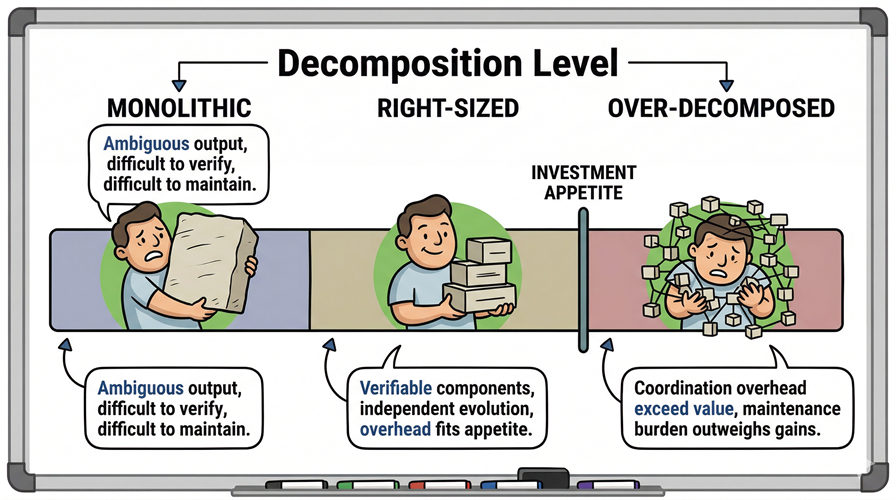

Investment Appetite and the Over-Decomposition Problem

There is a failure mode in the other direction. Excessive decomposition creates coordination overhead that eventually exceeds the value it produces. Every handoff between steps is a potential error surface. Every review gate is a human time cost. At some point, the process becomes larger than the problem it is solving.

The way to navigate this is to define your investment appetite before you decompose. Investment appetite is the total human effort you are willing to commit: the effort to execute the workflow, plus the effort to evolve and maintain it over time. Both components matter. A process that runs well once but requires significant rework for each new use case carries a higher long-term cost than it first appears to.

Decompose until the process exceeds that appetite. Then back off until it fits. No process is correctly sized on the first attempt; iteration is necessary to right-size each major workflow. But starting with a defined appetite gives the decomposition a stopping condition. Without one, you will keep splitting tasks until the coordination overhead has consumed the efficiency gains.
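The stopping condition can be sketched in Python, assuming you can roughly estimate per-step execution effort, ongoing upkeep, and a flat coordination cost per handoff. Every name and number here (`Plan`, `HANDOFF_HOURS`, `right_size`, the hour figures) is hypothetical; real estimates will be rougher, and the comparison is only as good as they are.

```python
from dataclasses import dataclass
from typing import Optional

HANDOFF_HOURS = 0.5  # hypothetical coordination cost per handoff between steps


@dataclass
class Plan:
    """One candidate decomposition of the same task."""
    name: str
    step_hours: list[float]  # human effort to execute (and review) each step
    upkeep_hours: float      # effort to evolve and maintain the process over time


def total_effort(plan: Plan) -> float:
    """Execution + upkeep + coordination overhead for every handoff."""
    handoffs = max(len(plan.step_hours) - 1, 0)
    return sum(plan.step_hours) + plan.upkeep_hours + handoffs * HANDOFF_HOURS


def right_size(plans: list[Plan], appetite_hours: float) -> Optional[Plan]:
    """Decompose until a plan exceeds the appetite, then back off to the last fit.

    `plans` must be ordered from coarse (few steps) to fine (many steps).
    Returns None if even the coarsest plan exceeds the appetite.
    """
    chosen = None
    for plan in plans:
        if total_effort(plan) > appetite_hours:
            break            # exceeds the appetite: stop decomposing here
        chosen = plan        # still fits: keep it as the current candidate
    return chosen


plans = [
    Plan("monolith", [3.0], upkeep_hours=3.0),                 # 6.0 h
    Plan("3 phases", [1.0, 1.0, 1.0], upkeep_hours=3.0),       # 7.0 h
    Plan("6 steps", [0.5] * 6, upkeep_hours=2.0),              # 7.5 h
    Plan("12 steps", [0.5] * 12, upkeep_hours=2.0),            # 13.5 h
]
print(right_size(plans, appetite_hours=8.0).name)
```

With an 8-hour appetite, the 12-step plan breaks the budget, so the sketch backs off to the 6-step plan. Re-running it as estimates change is one way to do the iterative right-sizing described above.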

The goal is not maximum decomposition. The goal is minimum decomposition sufficient to make the task verifiable, the output maintainable, and the process evolvable within the budget of effort you are actually prepared to sustain.

The Principle

Complex tasks handed to AI as a single unit produce outputs that are difficult to verify, difficult to improve, expensive to maintain, and overall lower quality. The same tasks broken into focused, well-scoped sub-tasks produce outputs that can be checked at each step, iterated independently, and evolved over time without rebuilding from scratch.

Decompose before you delegate. The work you invest in the structure is not overhead. It is the work.
