First Principles of AI Usage — Part 7

Constraints make AI better. This is counterintuitive. Most teams assume that giving an AI system more access, more context, and more freedom produces better results. The opposite is true. An AI with clear boundaries, defined output formats, and explicit prohibitions produces more focused, higher-quality output than one given unlimited freedom. The same principle that information security has enforced for decades applies directly: give the system access only to what it needs, and constrain its action space to what is appropriate.

That is Principle 7. Least privilege is a security discipline. Maximum constraint is a performance strategy. Together they form a single design philosophy for AI systems that are both safer and more effective.

The Security Case Is Settled

Least privilege is not a novel idea. Every infrastructure team enforces it for human operators: scoped IAM roles, short-lived credentials, just-in-time access provisioning. AI agents deserve the same treatment, and the industry has converged on this position.

The OWASP AI Agent Security Cheat Sheet codifies least privilege as a foundational control. AWS Well-Architected guidance for generative AI dedicates an entire best practice to implementing least-privilege access for agentic workflows. Microsoft’s 2026 security priorities include Conditional Access policies that block risky agents and enforce just-in-time access to resources. The consensus is clear.

The implementation pattern emerging in practice is the gateway model: a mediation layer that sits between AI agents and infrastructure APIs, ensuring every request is scoped to the minimum required permissions. The agent never touches the API directly. The gateway enforces the boundary. This mirrors how mature organizations already manage service-to-service communication; the only difference is that the caller is now an AI system rather than a microservice.
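Here is a minimal sketch of the gateway model in Python. It assumes a hypothetical scope vocabulary such as `tickets:read` and uses in-process handler functions as stand-ins for real infrastructure APIs. The agent submits a request. The gateway checks that request against the agent's granted scopes, records the decision, and only then forwards it. The names `Gateway`, `AgentRequest`, and `PermissionDenied` are illustrative and do not belong to any product cited here.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class AgentRequest:
    agent_id: str
    permission: str  # hypothetical scope string, e.g. "tickets:read"
    payload: dict


class PermissionDenied(Exception):
    pass


@dataclass
class Gateway:
    # Scopes granted per agent; an agent with no entry is denied everything.
    grants: dict[str, frozenset[str]]
    # Backend handlers keyed by permission; the agent never calls these directly.
    backends: dict[str, Callable[[dict], object]]
    # (agent_id, permission, allowed) for every request, allowed or not.
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def handle(self, req: AgentRequest) -> object:
        allowed = req.permission in self.grants.get(req.agent_id, frozenset())
        self.audit_log.append((req.agent_id, req.permission, allowed))
        if not allowed:
            raise PermissionDenied(f"{req.agent_id} lacks {req.permission}")
        return self.backends[req.permission](req.payload)


gw = Gateway(
    grants={"support-agent": frozenset({"tickets:read"})},
    backends={
        "tickets:read": lambda p: {"id": p["id"], "status": "open"},
        "tickets:delete": lambda p: f"deleted {p['id']}",
    },
)
print(gw.handle(AgentRequest("support-agent", "tickets:read", {"id": 42})))
# {'id': 42, 'status': 'open'}
try:
    gw.handle(AgentRequest("support-agent", "tickets:delete", {"id": 42}))
except PermissionDenied as err:
    print(err)  # support-agent lacks tickets:delete
```

The key design choice is deny-by-default. An agent with no entry in `grants` can do nothing at all, and the audit log records denied attempts alongside allowed ones.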

BeyondTrust frames the problem precisely: AI does not create privilege sprawl, but it amplifies it. An engineer with overly broad permissions makes one mistake at a time. An AI agent with overly broad permissions makes mistakes at machine speed, across every action in its execution loop. The blast radius scales with the clock speed of the actor.

The Performance Case Is Underappreciated

Security gets the headlines. The performance argument is less obvious and more important for day-to-day AI effectiveness.

Narrower scope leads to more focused output. OpenAI’s prompt engineering guidance explicitly recommends constraining the model’s action space: define output formats, specify what is out of scope, and set explicit prohibitions. O’Reilly’s Five Principles of Prompting codifies this as a core discipline. The guidance is consistent: when you tell an AI what it cannot do, what it does do improves.
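The three constraints named above (an output format, an explicit out-of-scope list, and explicit prohibitions) can be assembled mechanically. The sketch below assumes JSON output and uses hypothetical helper names. It builds the instruction text and checks replies but does not call any model API, so treat it as an illustration of the pattern, not as either source's recommended template.

```python
import json


def build_system_prompt(task: str, output_keys: list[str],
                        out_of_scope: list[str], prohibitions: list[str]) -> str:
    """Assemble a constrained instruction: task, output format, scope, prohibitions."""
    lines = [
        f"Task: {task}",
        "Respond with one JSON object containing exactly these keys: "
        + ", ".join(output_keys) + ".",
        "Out of scope (decline these requests):",
    ]
    lines += [f"- {item}" for item in out_of_scope]
    lines.append("Never:")
    lines += [f"- {rule}" for rule in prohibitions]
    return "\n".join(lines)


def conforms(reply: str, output_keys: list[str]) -> bool:
    """Accept a model reply only if it matches the declared output format."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and set(data) == set(output_keys)


prompt = build_system_prompt(
    task="Summarize the attached bug report.",
    output_keys=["summary", "severity"],
    out_of_scope=["Proposing code fixes", "Assigning the ticket"],
    prohibitions=["Invent ticket IDs", "Include customer email addresses"],
)
print(conforms('{"summary": "Login fails on Safari", "severity": "high"}',
               ["summary", "severity"]))  # True
```

Validating each reply against the declared format makes the output constraint enforceable instead of merely advisory.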

This applies identically in agentic contexts. An agent with access to ten APIs will attempt to use whichever one its reasoning selects, regardless of whether that selection is optimal or even safe. An agent with access to the two APIs its task requires cannot make that category of error. You have not reduced its intelligence. You have reduced its opportunity to be wrong.
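One way to express per-task tool scoping is to keep a full catalog and hand the model only the subset a task declares. The catalog entries and the `tools_for_task` helper below are hypothetical. A real agent framework would carry full tool schemas, not one-line descriptions.

```python
# Hypothetical catalog of every tool the platform could expose.
TOOL_CATALOG = {
    "search_tickets": {"description": "Search support tickets by keyword"},
    "read_ticket": {"description": "Fetch one ticket by id"},
    "close_ticket": {"description": "Close a ticket"},
    "issue_refund": {"description": "Refund a customer payment"},
    "query_billing_db": {"description": "Run SQL against the billing database"},
}


def tools_for_task(required: set[str], catalog: dict[str, dict]) -> dict[str, dict]:
    """Return only the tool definitions a task declares; fail loudly on unknown names."""
    unknown = required - set(catalog)
    if unknown:
        raise ValueError(f"unknown tools requested: {sorted(unknown)}")
    return {name: catalog[name] for name in sorted(required)}


# A triage task sees two tools; refunds and raw SQL are not even visible to it.
triage_tools = tools_for_task({"search_tickets", "read_ticket"}, TOOL_CATALOG)
print(sorted(triage_tools))  # ['read_ticket', 'search_tickets']
```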

The same logic applies to context. Pasting an entire codebase into a conversation when the AI only needs one function does not help. It introduces noise that degrades the signal. Least privilege is not just about permissions; it is about information discipline.
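For Python sources, the standard library's `ast` module can extract a single top-level function, so the prompt contains that function instead of the whole file. This minimal sketch assumes the target is a top-level function. It does not search methods inside classes or nested functions, and the extracted text excludes decorators. The `codebase` string is a made-up example.

```python
import ast


def extract_function(source: str, name: str) -> str:
    """Return the source text of one top-level function (decorators not included)."""
    tree = ast.parse(source)
    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)) and node.name == name:
            segment = ast.get_source_segment(source, node)
            if segment is not None:
                return segment
    raise LookupError(f"function {name!r} not found")


codebase = '''
import os

def load_config(path):
    return open(path).read()

def parse_port(value):
    port = int(value)
    if not 0 < port < 65536:
        raise ValueError("port out of range")
    return port
'''

# Only parse_port reaches the model; load_config and the imports stay out.
snippet = extract_function(codebase, "parse_port")
prompt = f"Review this function for edge cases:\n\n{snippet}"
```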

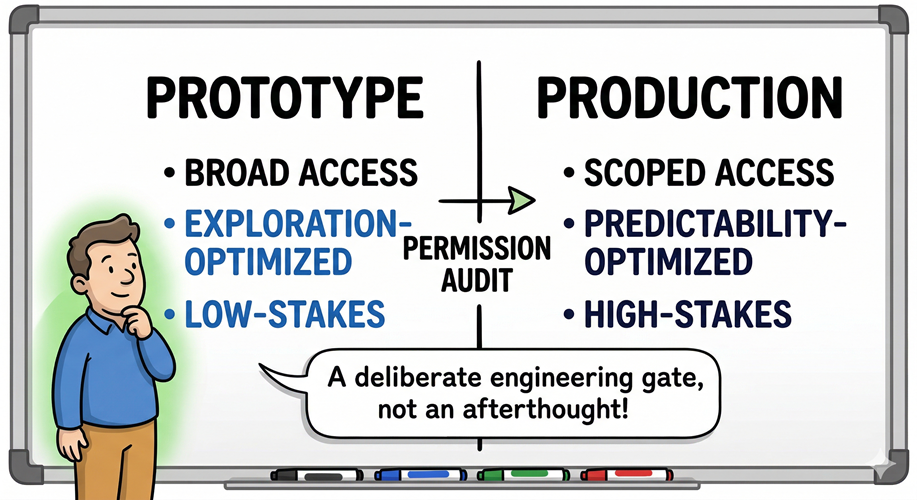

The Prototype-to-Production Gap

Here is where engineering leaders encounter the real friction. In prototyping, broad permissions are a feature. You do not yet know the full scope of what the AI will need. Constraining too early kills exploration. The problem is not the prototype. The problem is the prototype that ships.

Moving from prototype to production requires a permissions audit that most teams treat as overhead rather than design work. What did the agent actually use? Which APIs did it call? What data did it access? The answers define the minimum viable permission set. Everything else gets revoked.
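A first pass at the audit can be simple set arithmetic: compare what was granted against what the prototype's logs show it actually used. This sketch assumes permissions are plain strings and that the log covers a representative workload. A permission used only by a rare path, such as a month-end job, will not show up in a short observation window and would be wrongly flagged. Read the output as a list to review, not one to revoke automatically.

```python
def audit_permissions(granted: set[str], observed: list[str]) -> tuple[set[str], set[str]]:
    """Split granted permissions into (minimum viable set, candidates to revoke)."""
    used = set(observed)
    keep = granted & used      # granted and actually exercised
    revoke = granted - used    # granted but never exercised in the window
    return keep, revoke


granted = {"tickets:read", "tickets:write", "billing:read", "billing:refund", "users:admin"}
observed = ["tickets:read", "tickets:read", "billing:read", "tickets:write"]
keep, revoke = audit_permissions(granted, observed)
print(sorted(keep))    # ['billing:read', 'tickets:read', 'tickets:write']
print(sorted(revoke))  # ['billing:refund', 'users:admin']
```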

This is unglamorous work. It slows the path to production. It is also the difference between a system you can defend and a system you can only hope will not fail. The constraint boundary is not optional infrastructure. It is the production boundary.

The Trust Equation

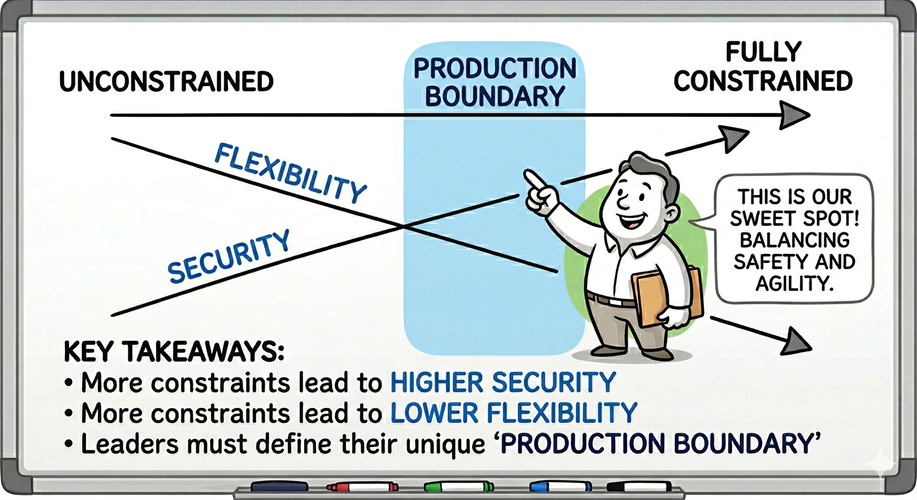

Constraints create a three-variable tradeoff that engineering leaders need to navigate honestly.

More constraints produce more trust. When stakeholders can see that an AI agent operates within defined boundaries, with scoped permissions and auditable actions, adoption resistance drops. The constraint is not a limitation you apologize for. It is the mechanism by which the organization grants the system authority.

More constraints slow speed to market. Defining narrow permissions, building gateway layers, scoping tool access per task: this is engineering effort that does not ship features. The investment is real.

More constraints accelerate adoption velocity, if the constraints are visible. This is the variable most teams miss. A well-constrained system that publicizes its constraints earns organizational trust faster than an unconstrained system that produces good results quietly. People adopt systems whose boundaries they understand.

The calculus is straightforward. Pay the constraint cost once at the production boundary. Earn the trust dividend continuously.

Where Constraints Break Down

Constraining inherently reduces flexibility. This is the honest caveat.

If an AI agent operates through narrow, predefined API calls, every new use case requires engineering effort to expand its capabilities. The agent cannot reason its way to a novel solution if the permission model prevents it from reaching the tools that solution requires. You have traded adaptability for predictability.

This tradeoff is acceptable in production systems where the action space is known and the stakes justify the rigidity. It is counterproductive in exploratory contexts where the value of AI is precisely its ability to find unexpected connections across a broad information surface.

The principle is not “constrain everything.” The principle is that the level of constraint should match the context. Prototypes get broad access because the cost of exploration is low. Production systems get narrow access because the cost of unexpected behavior is high. The boundary between the two is the engineering decision that matters.
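The stage-dependent boundary can be recorded as explicit policy instead of being left to convention. This sketch assumes two stages and a hypothetical naming scheme. Prototype access is broad but limited to `sandbox:` resources, while production grants only an explicit allowlist.

```python
from enum import Enum


class Stage(Enum):
    PROTOTYPE = "prototype"
    PRODUCTION = "production"


# Hypothetical policy table: broad-but-sandboxed for prototypes, allowlist-only for production.
POLICIES = {
    Stage.PROTOTYPE: {"allow_prefixes": ("sandbox:",), "allowlist": frozenset()},
    Stage.PRODUCTION: {
        "allow_prefixes": (),
        "allowlist": frozenset({"tickets:read", "tickets:write"}),
    },
}


def is_allowed(stage: Stage, permission: str) -> bool:
    """Allow a permission if it is explicitly listed or falls under an allowed prefix."""
    policy = POLICIES[stage]
    # str.startswith accepts a tuple of prefixes; an empty tuple matches nothing.
    return permission in policy["allowlist"] or permission.startswith(policy["allow_prefixes"])
```

Because the two policies live side by side, promoting a system from prototype to production becomes a visible change to one table, not a silent drift in permissions.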

The Design Question

Least privilege is a solved problem at the infrastructure layer. Your team already enforces it for human operators, for service accounts, for CI/CD pipelines. The question is not whether to apply it to AI systems. The question is whether you will do so deliberately, at the production boundary, or retroactively, after an agent with broad permissions demonstrates why the boundary was needed.

Constraints do not limit AI. They define the space in which AI earns trust.

References

- OWASP AI Agent Security Cheat Sheet

- AWS Well-Architected Generative AI Lens GENSEC05-BP01

- BeyondTrust — AI Agent Identity Governance & Least Privilege

- Strata — 8 Strategies for AI Agent Security

- OpenAI — Prompt Engineering Guide

- O’Reilly — Five Principles of Prompting

- Microsoft Security Blog — AI Identity & Access Priorities 2026

- InfoQ — Building a Least-Privilege AI Agent Gateway

- IBM — AI Agent Security Best Practices

- CIO — Taming AI Agents: The Autonomous Workforce of 2026
